Writing In-Product Surveys

Tips for creating custom in-product surveys

While most basic survey best practices are consistent no matter how you field a survey, in-product studies are a unique case for which some additional rules apply.

Unlike typical surveys, in which users respond at their convenience, an in-product study asks users to stop what they are doing and pay attention to something else. For this reason, your primary goal when crafting an in-product study, aside from getting your key questions answered, is to make it easier for your users to take your study than to dismiss it.

Following the guidelines below will help you achieve this goal, and in turn, you will maximize your response rate, minimize disruption to your users, and obtain high-quality data for your critical business questions.

Make it easy to understand

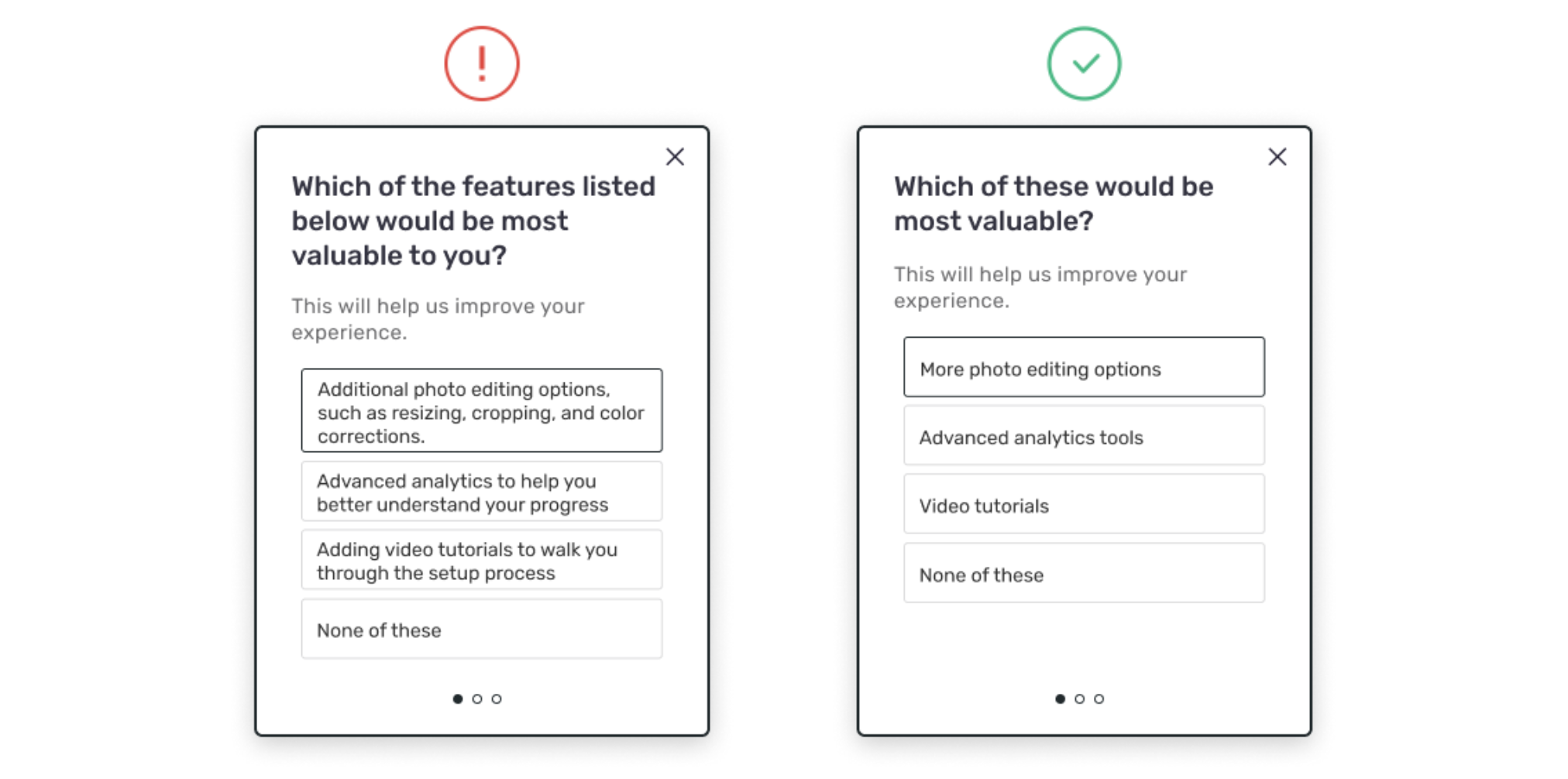

The first step in making your study easy to take is making sure your questions (especially the first one) are easy to read and instantly understandable. If a question is difficult to interpret or overly wordy, visitors are likely to dismiss it and move on. Aim for a third-grade reading level, although achieving this is sometimes harder than it seems. At Sprig, we like to run our study questions through the Hemingway app to gauge their difficulty and adjust as needed before launching a new study.
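If you want a quick check inside your own tooling, a readability formula can act as a rough gauge. The sketch below uses the Automated Readability Index (ARI), a standard formula that approximates a US grade level from characters per word and words per sentence. We are not claiming this is the formula any particular app uses, and the function names and the grade-3 threshold are assumptions made for illustration.

```python
import re


def automated_readability_index(text: str) -> float:
    """Approximate a US grade level with the Automated Readability Index."""
    # Words are runs of letters/digits, optionally joined by apostrophes.
    words = re.findall(r"[A-Za-z0-9]+(?:'[A-Za-z0-9]+)*", text)
    # Sentences are split on terminal punctuation; empty pieces are dropped.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not words or not sentences:
        raise ValueError("text must contain at least one word")
    # ARI counts letters and digits only, so apostrophes are excluded.
    chars = sum(ch.isalnum() for word in words for ch in word)
    return (
        4.71 * (chars / len(words))
        + 0.5 * (len(words) / len(sentences))
        - 21.43
    )


def is_easy_to_read(text: str, max_grade: float = 3.0) -> bool:
    """Hypothetical helper: True if the text scores at or below max_grade."""
    return automated_readability_index(text) <= max_grade


if __name__ == "__main__":
    for question in (
        "How easy was it to set up your account?",
        "To what extent did the configuration procedure facilitate "
        "your organizational objectives?",
    ):
        print(round(automated_readability_index(question), 1), question)
```

Treat the score as a signal, not a verdict. Very short questions can score below zero, and a low score does not guarantee that a question is clear.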

Make it easy to answer

In addition to making your question quick to understand, it's critical to make it easy to answer. This means minimizing the use of high-effort questions, particularly as the first question in your study.

High-effort questions include:

- Open ends, which require users to construct a thoughtful response.

- Sensitive questions, such as those about personal or financial information, that some users may feel uncomfortable answering.

- Questions that ask users to imagine a scenario that may not apply to them.

Note that we suggest minimizing high-effort questions, not eliminating them. Open ends, for example, provide exceptionally valuable insight, and we absolutely recommend using them where appropriate. However, it's important to be thoughtful about how many to include in your study and when to surface them, as open-ended questions typically receive lower response rates than closed questions.

In particular, it's best not to start your study with an open-ended question. While it's certainly possible to do so and still obtain responses, it's often more effective to lead with a simple rating scale or multiple-choice question that requires minimal effort and gets your foot in the door. Users are then more likely to feel committed and follow through on your second question, even if it's an open end. The same approach applies to other high-effort questions, such as sensitive questions.
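If you keep your study drafts in code or a spreadsheet export, you can check question order automatically. The sketch below is a minimal illustration: the `Question` class, the `kind` labels, and the `lead_with_low_effort` helper are all hypothetical names, not part of any Sprig API. It moves the first low-effort question to the front and leaves the rest of the order untouched.

```python
from dataclasses import dataclass
from typing import List

# Assumed labels for the high-effort question types described above.
HIGH_EFFORT_KINDS = {"open_ended", "sensitive", "hypothetical"}


@dataclass
class Question:
    text: str
    kind: str  # e.g. "rating", "multiple_choice", "open_ended"


def is_high_effort(question: Question) -> bool:
    return question.kind in HIGH_EFFORT_KINDS


def lead_with_low_effort(questions: List[Question]) -> List[Question]:
    """Return a copy with the first low-effort question moved to the front.

    The relative order of all other questions is preserved. If every
    question is high-effort, the order is returned unchanged.
    """
    for index, question in enumerate(questions):
        if not is_high_effort(question):
            return [question] + questions[:index] + questions[index + 1:]
    return list(questions)


if __name__ == "__main__":
    draft = [
        Question("What almost stopped you from signing up?", "open_ended"),
        Question("How easy was it to sign up?", "rating"),
    ]
    for question in lead_with_low_effort(draft):
        print(question.kind, "-", question.text)
```

The helper only moves one question because the goal is a low-effort opener, not a full reordering. You still decide which questions matter most.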

Make it relevant

When conducting an in-product study, it's important to ask the right question at the right time. That means asking questions that are relevant to what users are doing at their stage of the journey, or on the specific page URL where you're reaching them. For example, avoid asking users who have been active for months about their onboarding experience, or asking users on an account settings page how they feel about your search experience. Instead, questions about onboarding should ideally be targeted to users who are currently in the onboarding process or have just completed it. And if you're interested in the search experience, the best place to target users is on the search results page.
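To make the idea concrete, here is a minimal sketch of a relevance rule that combines a page match with a journey-stage limit. Everything in it is an assumption for illustration: the `Targeting` and `VisitContext` classes, their fields, and the example paths are hypothetical and do not describe how Sprig's targeting is configured.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Targeting:
    # Only show the study on pages whose path starts with this prefix.
    page_path_prefix: str
    # Only show the study to users who signed up within this many days.
    # None means no limit on account age.
    max_days_since_signup: Optional[int] = None


@dataclass
class VisitContext:
    page_path: str
    days_since_signup: int


def should_show(targeting: Targeting, visit: VisitContext) -> bool:
    """Return True only if both the page and the journey stage match."""
    if not visit.page_path.startswith(targeting.page_path_prefix):
        return False
    limit = targeting.max_days_since_signup
    if limit is not None and visit.days_since_signup > limit:
        return False
    return True


if __name__ == "__main__":
    onboarding_study = Targeting(page_path_prefix="/", max_days_since_signup=7)
    search_study = Targeting(page_path_prefix="/search")

    long_time_user_on_settings = VisitContext("/settings/account", 120)
    print(should_show(onboarding_study, long_time_user_on_settings))
    print(should_show(search_study, long_time_user_on_settings))
```

In this sketch, a user who has been active for months never sees the onboarding study, and the search study appears only on search pages.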

Ask your most important questions first

For in-product surveys, it's crucial to get the information you want most right away, in the first 1-2 questions. This is because, generally speaking, response rates decline with each additional question, and this is particularly true for open-ended questions. It's often better to launch 2 separate, shorter studies than one longer study with critical questions at the end. Questions at the end of a longer study receive less attention and have a substantially lower response rate.

Keep it short

The response rate declines for each additional question you ask, so choose your questions wisely. While you can include up to 5 questions in your studies, we recommend no more than 3 questions for in-product studies. If you need to ask individual users more than 3 questions, your study is probably better suited to a traditional survey.
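If you review study drafts programmatically, the two limits above are easy to encode. This is a small sketch: the constants come from the guidance in this section, while the `review_length` function and its return strings are hypothetical.

```python
MAX_QUESTIONS = 5    # upper limit for a study, as stated above
RECOMMENDED_MAX = 3  # recommended ceiling for in-product studies


def review_length(question_count: int) -> str:
    """Classify a draft study by its number of questions."""
    if question_count < 1:
        raise ValueError("a study needs at least one question")
    if question_count > MAX_QUESTIONS:
        raise ValueError(f"a study can include at most {MAX_QUESTIONS} questions")
    if question_count > RECOMMENDED_MAX:
        return "consider a traditional survey or shorter separate studies"
    return "ok"


if __name__ == "__main__":
    for count in (2, 4):
        print(count, "->", review_length(count))
```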
